The second movement was put together very quickly using a keyboard synthesizer and a 4-channel tape recorder.

By contrast, the third movement seemed to take forever to compose and then to realize. In it I was experimenting with an “adaptive canon,” in which the imitating voices were scaled in time to fit in the same-sized measure as the leading voice. The leading voice of the canon would shift back and forth between 2/2 and 5/4, creating havoc for the voices that followed. I worked out the durations with a portable calculator and composed the movement during a winter residency at the Yaddo Colony in Saratoga Springs, New York.

When I came back to New York City, I began the process of realizing the synthesized sound. Charles Dodge had taught me how to use MUSIC360, a synthesis program written by Barry Vercoe at MIT and based on the original synthesis software born at Bell Labs a decade earlier.

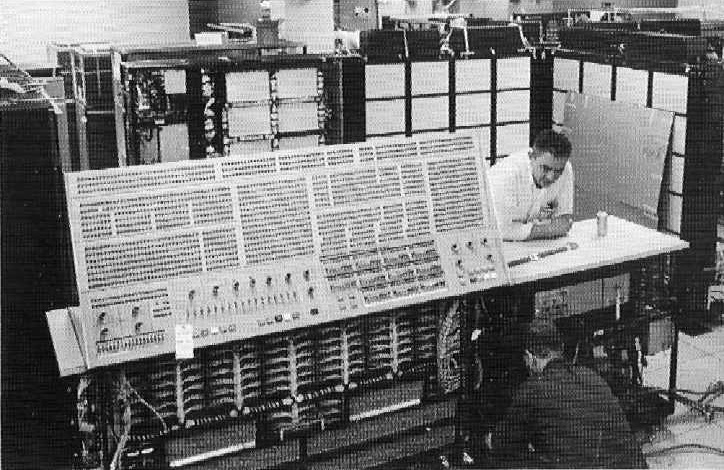

The MUSIC360 program processes two collections of information: a numerically coded “score,” and a group of “instruments” which the composer would build in software from a set of building blocks called unit generators. I would type all this data at a computer terminal in the university computer center, then submit the job to the IBM mainframe for batch processing. Sound synthesis is very time-intensive, at that time requiring about 10 seconds of computer time for each second of finished sound, whereas most of the jobs submitted by students from other departments would complete in thousandths of a second. The mainframe would schedule jobs based on the individual’s account priority and the estimated time for the job, and often this meant that music jobs would be run by the night shift. I would come by the next morning to look over the output and try to analyze the error messages that were almost always there. Then I would edit my files and submit the job again. If the program ran to completion, I would then borrow the data tape that held the results of the computation, package it with an audio tape and instruction sheet, then take the package to the Physics department office, from which a driver would make a daily trip to the high energy physics laboratory in Irvington, New York. The physics laboratory had an IBM360 computer that could be used for single-user jobs without interruption. There an operator would convert my data tapes to 4-channel audio tape, and the van driver would return the tapes on the next scheduled trip to Manhattan.

When the tape arrived on campus, I could finally take it to a tape studio and listen to what I had done. In terms of time and physical resources it was quite an undertaking, and one I found to be richly rewarding.” – Maurice Wright

Quoted from 20th Century Consort

Photo courtesy of Columbia University
